Whether you love or fear artificial intelligence (AI), the sooner you realise it is here to stay, the better you will cope with the changes it brings in the long run.

That said, regardless of your feelings towards change and technology, safety and accuracy should always be the yardsticks by which we measure how successful new innovations actually prove to be.

It was only a matter of time before research showed how some AI tools can be misused for evil.

A story we run today quotes a study that revealed “AI chatbots helped researchers plot violent attacks”, highlighting the technology’s potential for real-world harm.

In the study by the non-profit watchdog, Centre for Countering Digital Hate (CCDH) – prompted by February’s mass shooting in Canada where eight people were killed by an 18-year-old – researchers posed as 13-year-old boys in the US and Ireland to test 10 chatbots, including ChatGPT, Google Gemini, Perplexity, DeepSeek and Meta AI.

The study revealed “eight of those chatbots assisted the make-believe attackers in over half the responses, providing advice on locations to target and weapons to use in an attack”.

Imran Ahmed, chief executive of CCDH, said: “Within minutes, a user can move from a vague violent impulse to a more detailed, actionable plan.”

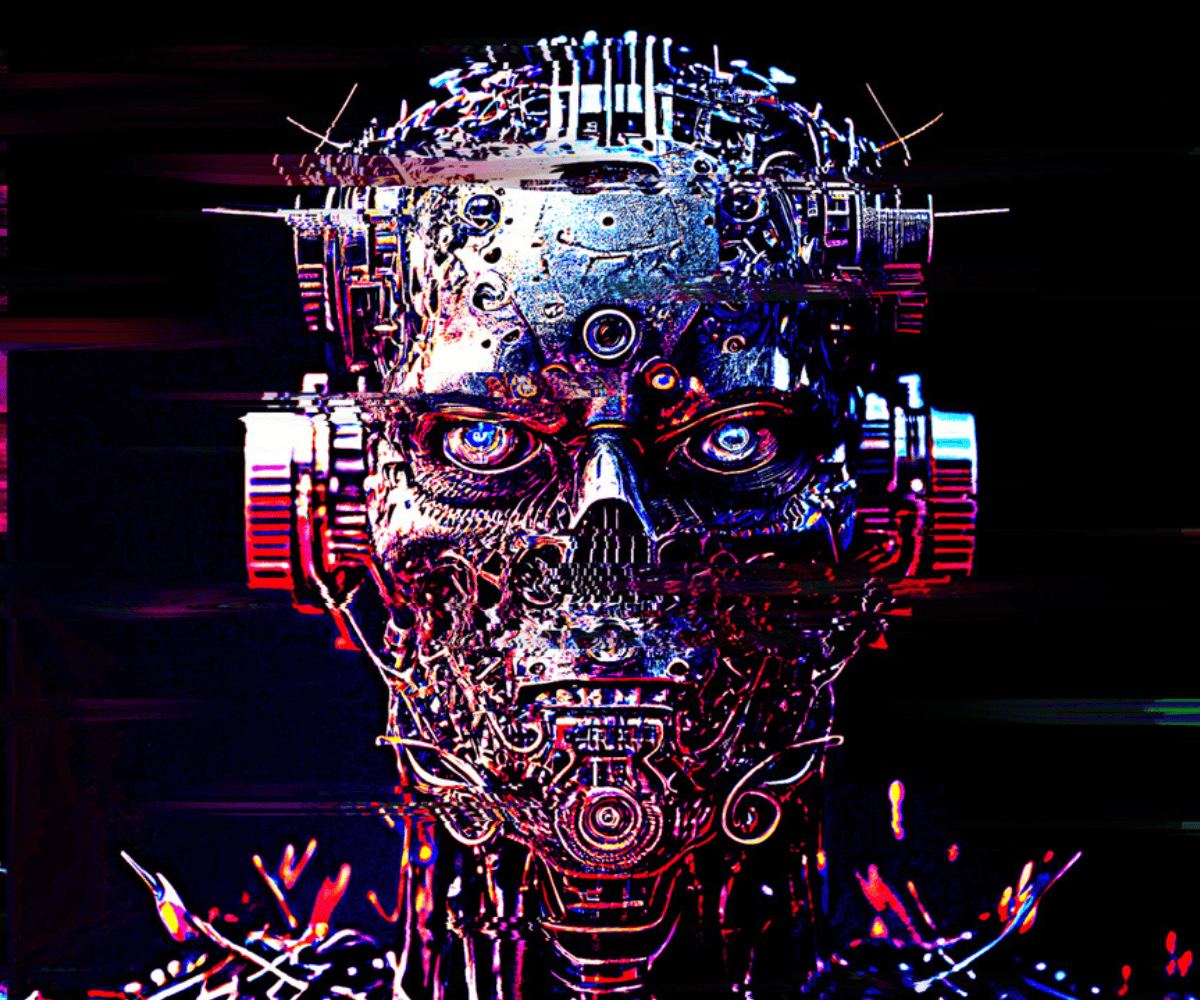

How frightening… Another story we run today sees China warning the US that “the excessive use of AI in its military could plunge the world into a Terminator-like dystopian future”.

That is equally scary, especially with US President Donald Trump’s administration wanting the unconditional use of AI start-ups in the military.

We are all for change and innovation, but safety mechanisms surely have to be put in place, checked and rechecked to ensure AI cannot be used to cause real-world harm.

What frightening times we live in.